Fluent Bit settings are usually in /etc/td-agent-bit/nf.

Fluentd to HEC

We have the same caveats and pre-reqs as for Fluent Bit, but the config is a bit different. You'll need to install the splunk_hec fluentd mod if you don't already have it, like so: sudo gem install fluent-plugin-splunk-hec. This config snippet will send all logs to the HEC endpoint:

event_source logs_from_fluentd

Restart fluentd after putting it in place.

Splunk Forwarder (Universal or Heavy) to Splunk TCP

Stream can ingest the native Splunk2Splunk (S2S) protocol directly. For Stream Cloud especially, we recommend setting an authorization token in Sources -> Splunk TCP -> Auth Tokens so that your receiver will accept only your traffic.

Several ports are predefined and ready to receive data. There are also 11 ports (20000–20010) that you can define for yourself with new sources. Only a few of the listed inputs reference actual products. The majority are open protocols that many different collection agents can use to send data into Stream.

This is not intended to be a ground-up how-to for each source type. We're assuming you are already using the source in question, and are simply looking for how to add a new destination pointing at Stream.

Sending Data to Stream Cloud from Various Agents

In every case, we recommend starting a live capture on the Source in Stream once you activate Stream Cloud, to check and verify that the data is coming in. Also, save your capture to a sample file to work with while you build your Pipelines. Finally, you can use these examples to configure on-prem or self-hosted Stream as well. The Stream Cloud offering simply comes with many of these Sources ready to roll.

There are many output options for Fluent Bit, and several that would work with Stream. For this case, we'll choose the Splunk HEC formatting option. You will need the Ingest Address for in_splunk_hec from Cribl.Cloud's Network page. And you'll need the HEC token from Sources -> Splunk HEC -> input def -> Auth Tokens. Finally, you'll want to adjust match as appropriate for your use case. With an asterisk (wildcard) value there, we'll be sending all events to the endpoint. Restart the td-agent-bit service after making the change.
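Before pointing an agent at the HEC endpoint, it can help to confirm that the Ingest Address and token are accepted. The following is a minimal Python sketch, not an official client: the host name, port 8088, the /services/collector/event path, and the token are placeholder assumptions to replace with the values from your own Cribl.Cloud Network page and Auth Tokens setting, and the function names are made up for illustration.

```python
import json
import urllib.request

# Placeholders (assumptions): substitute your own Ingest Address and HEC token.
HEC_URL = "https://in.example.cribl.cloud:8088/services/collector/event"
HEC_TOKEN = "00000000-0000-0000-0000-000000000000"


def build_hec_request(url, token, event, sourcetype="test"):
    """Build (but do not send) a Splunk HEC-style POST request."""
    body = json.dumps({"event": event, "sourcetype": sourcetype}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            # HEC expects the token in a "Splunk <token>" Authorization header.
            "Authorization": f"Splunk {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_test_event():
    """Send one test event and return the HTTP status and response body."""
    req = build_hec_request(HEC_URL, HEC_TOKEN, "hello from a test script")
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status, resp.read().decode("utf-8")
```

Once the placeholders are replaced, calling send_test_event() while a live capture is running on the Source should make the test event show up in the capture.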

After you have the account established, you’ll be presented with a list of input endpoints available for sending data into the service.

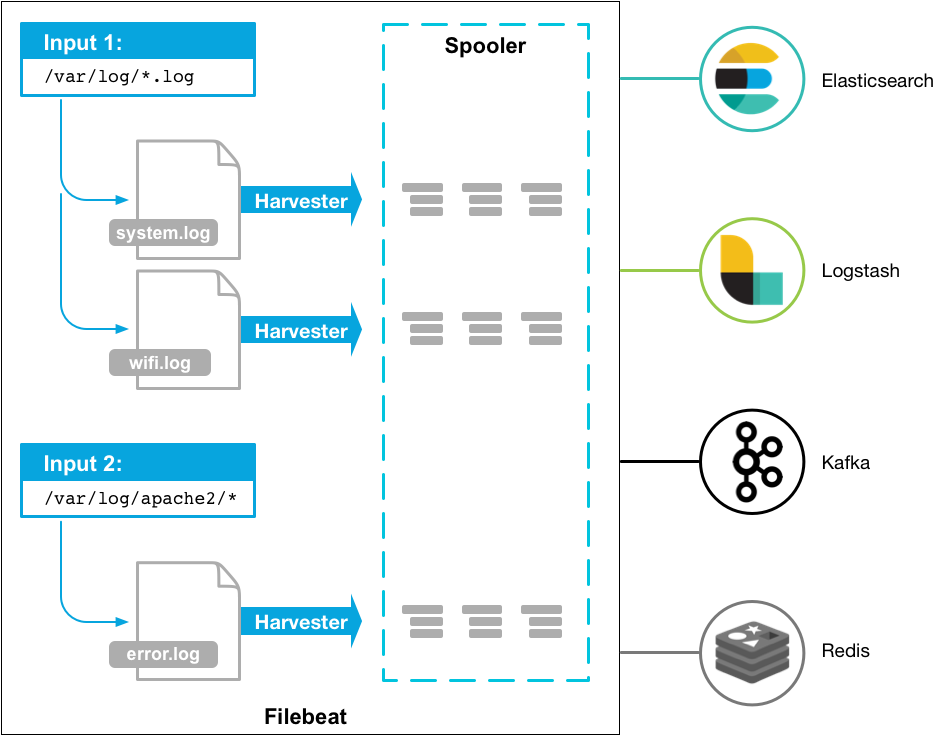

Cribl released Stream Cloud to the world in the Spring of 2021, making it easier than ever to stand up a functional o11y pipeline. The service is free for up to 1TB per day, and can be upgraded to unlock all the features and support, with paid plans starting at $0.17 per GB so you pay only for exactly what you use. In this blog post, we'll go over how to quickly get data flowing into Stream Cloud from a few common log sources. Head over to Cribl.Cloud and get yourself a free account now.

I am doing a PoC on ELK and have come across an issue. I have had a look at many topics on and StackOverflow, but none seems to have helped. I am trying to configure multiline events via Filebeat (using S3 input) and consuming them in Logstash.

The issue that I am facing is that even after setting the multiline configuration in Filebeat, I still see the lines of a stacktrace as individual events in Logstash. Since Logstash receives the lines of the stacktrace not as a single event but as individual lines, it is leading to a _grokparsefailure at that end, which is completely understandable, as Filebeat should club those lines into the same event prior to sending them to Logstash. Other single-line events are working as expected and I am able to visualise them on Kibana.

Multiline.pattern: '^

Any feedback, suggestions and recommendations are most welcome.
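To illustrate what "clubbing" stacktrace lines into one event means, here is a small Python sketch of the general technique. It is not Filebeat's code. It roughly mirrors the behaviour of multiline.negate: true with multiline.match: after, where any line that does not match the start pattern is appended to the previous event. The timestamp pattern and the sample lines are assumptions made up for the example, since the pattern in the question is cut off.

```python
import re


def group_multiline(lines, start_pattern):
    """Join continuation lines onto the event that precedes them."""
    start = re.compile(start_pattern)
    events = []
    for line in lines:
        # A line matching the start pattern (or the very first line) opens a new event.
        if start.match(line) or not events:
            events.append(line)
        else:
            # Anything else is treated as a continuation, e.g. a stacktrace frame.
            events[-1] += "\n" + line
    return events


# Hypothetical sample: events are assumed to begin with a YYYY-MM-DD timestamp.
sample = [
    "2021-06-01 12:00:00 ERROR Something failed",
    "java.lang.IllegalStateException: boom",
    "    at com.example.App.main(App.java:10)",
    "2021-06-01 12:00:01 INFO Recovered",
]
events = group_multiline(sample, r"^\d{4}-\d{2}-\d{2}")
print(len(events))  # 2
```

If the grouped output looks right here but Logstash still receives single lines, the pattern itself is probably fine and the place to look is where the multiline settings sit in the Filebeat input configuration.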
